Example: pandas

This guide walks through running pandas (~170k tests, Cython extensions) under DAGZ.

The example is Docker-based: a single image bakes pandas, dagz, and Postgres + MariaDB so the -m db slice runs without any external services.

The pytest plugin is unsupported on macOS, so Docker is the only supported way to run pandas under DAGZ on a Mac; on Linux the same image is a clean way to keep the build environment isolated from the host.

Prerequisites

Install the zb runtime and start the local daemon on the host. The container will reach the daemon over the Docker bridge:

curl -LsSf https://dagz.run/install.sh | bash

zb env local up --bg

For containers to reach the host daemon, enable listen_on_docker_bridge in ~/.dagz/local.env/daemon.yaml (see Getting Started: Start the local environment).

Build the image

Save the Dockerfile below and build it:

# syntax=docker/dockerfile:1
FROM python:3.12-slim-bookworm

RUN apt-get update && apt-get install -y --no-install-recommends \
        postgresql mariadb-server \
        build-essential ninja-build curl git \
        libgl1 libglib2.0-0 \
    && rm -rf /var/lib/apt/lists/*

# Init PostgreSQL (password + database)
RUN pg_ctlcluster 15 main start \
    && su postgres -c "psql -c \"ALTER USER postgres WITH PASSWORD 'postgres'\"" \
    && su postgres -c "createdb pandas" \
    && pg_ctlcluster 15 main stop

# Init MariaDB (TCP root access for pymysql + database). Pandas's fixtures
# connect to host=localhost; reverse DNS in the container resolves the
# client IP back to 'localhost', so we grant on both 127.0.0.1 and localhost.
RUN mysqld_safe & \
    while ! mysqladmin ping --silent 2>/dev/null; do sleep 0.1; done \
    && mysql -e "\
        DROP USER IF EXISTS 'root'@'localhost'; \
        CREATE USER 'root'@'localhost' IDENTIFIED VIA mysql_native_password USING ''; \
        CREATE USER IF NOT EXISTS 'root'@'127.0.0.1' IDENTIFIED VIA mysql_native_password USING ''; \
        CREATE USER IF NOT EXISTS 'root'@'%' IDENTIFIED VIA mysql_native_password USING ''; \
        GRANT ALL ON *.* TO 'root'@'127.0.0.1' WITH GRANT OPTION; \
        GRANT ALL ON *.* TO 'root'@'localhost' WITH GRANT OPTION; \
        GRANT ALL ON *.* TO 'root'@'%' WITH GRANT OPTION; \
        CREATE DATABASE IF NOT EXISTS pandas; \
        FLUSH PRIVILEGES" \
    && mysqladmin shutdown

RUN curl -LsSf https://astral.sh/uv/install.sh | sh
ENV PATH="/root/.local/bin:$PATH"

# Clone pandas tip, install deps + editable build
RUN git clone https://github.com/pandas-dev/pandas.git /pandas
WORKDIR /pandas
RUN uv venv /venv --seed --python 3.12
ENV VIRTUAL_ENV=/venv PATH="/venv/bin:$PATH"
RUN uv pip install -r requirements-dev.txt
RUN uv pip install -v -e . --no-build-isolation
RUN git config --global --add safe.directory /pandas

# pandas's meson-python editable install registers a custom MetaPathFinder
# instead of adding /pandas to sys.path. DAGZ resolves test paths to module
# names via sys.path, so put /pandas on PYTHONPATH explicitly.
ENV PYTHONPATH=/pandas

# Pre-install dagz-pytest's runtime deps so first container start is fast.
RUN uv pip install dagz

# Install the zb CLI (lands in /root/.local/bin, already on PATH).
RUN curl -LsSf https://dagz.run/install.sh | bash

COPY <<'EOF' /entrypoint.sh
#!/bin/bash
set -e
pg_ctlcluster 15 main start
mysqld_safe &
while ! mysqladmin ping --silent 2>/dev/null; do sleep 0.1; done
exec "$@"
EOF

RUN chmod +x /entrypoint.sh
ENV PATH=$PATH:/venv/bin
ENTRYPOINT ["/entrypoint.sh"]
CMD ["bash"]

docker build -t dagz-pandas-demo:latest .

The image clones pandas at main, sets up a venv, installs dagz, and pre-initializes Postgres + MariaDB.

The entrypoint starts both databases before any user command.
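The PYTHONPATH comment in the Dockerfile says that test paths are resolved to module names via sys.path. The sketch below illustrates that general idea only; module_name_for is a hypothetical helper written for this guide and is not DAGZ's actual resolver.

```python
from pathlib import PurePosixPath


def module_name_for(test_path, sys_path):
    """Map a file path to a dotted module name using the first matching sys.path entry.

    Hypothetical illustration; DAGZ's real resolution logic may differ.
    """
    path = PurePosixPath(test_path)
    for entry in sys_path:
        try:
            rel = path.relative_to(PurePosixPath(entry))
        except ValueError:
            continue  # the file does not live under this sys.path entry
        parts = list(rel.with_suffix("").parts)
        if parts and parts[-1] == "__init__":
            parts.pop()  # a package's __init__.py maps to the package itself
        return ".".join(parts)
    return None  # no entry matched, so the path cannot be named


# With /pandas on the path the test file resolves to a module name.
print(module_name_for("/pandas/pandas/tests/io/test_sql.py", ["/venv/lib", "/pandas"]))
# Without it (the editable-install situation) nothing matches.
print(module_name_for("/pandas/pandas/tests/io/test_sql.py", ["/venv/lib"]))
```

The second call returns None, which is the situation the explicit ENV PYTHONPATH=/pandas line is meant to avoid.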

Clone pandas on the host

Clone pandas on the host so you can edit it with your editor, run git commands, and have changes visible inside the container.

The image bakes its own clone at /pandas, but the bind mount in the next step replaces it — without a host clone there's nothing to mount.

git clone https://github.com/pandas-dev/pandas.git

cd pandas

Drop dagz_pandas_integ.py and the conftest snippet from Parallelizing DB tests into this directory if you plan to run the -m db slice.

Run the container

Bind-mount your pandas checkout at /pandas so edits and git reset --hard on the host are visible inside the container. The --add-host flag is only needed on Linux; on macOS, host.docker.internal resolves automatically:

docker run -it --rm \
    --env DAGZ_URL=http://host.docker.internal:29111 \
    --add-host=host.docker.internal:host-gateway \
    -v "${PWD}:/pandas" \
    dagz-pandas-demo:latest \
    bash

Inside the container, do a one-time editable install of the bind-mounted pandas.

--config-settings=builddir=/build tells meson to put compiled artifacts in /build (container fs) instead of inside /pandas, which would pollute the host checkout:

uv pip install -v -e . --config-settings=builddir=/build --no-build-isolation

The first build compiles all C/Cython extensions and takes a few minutes. Subsequent invocations reuse /build and are fast.
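Before running tests, it can help to confirm that the container can reach the host daemon. The check below is a minimal sketch: it assumes the daemon accepts plain TCP connections on the host and port from DAGZ_URL above, and daemon_reachable is a hypothetical helper name, not part of dagz.

```python
import socket


def daemon_reachable(host="host.docker.internal", port=29111, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout.

    Only checks that something is listening; it does not speak the daemon's protocol.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

If daemon_reachable() returns False inside the container, the listen_on_docker_bridge setting and the --add-host flag are the first things to re-check.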

Run tests with DAGZ

Inside the container, the first run records the baseline:

pytest --dagz pandas/tests/

Subsequent runs use the same command; with a baseline in place, DAGZ selects only the tests affected by your changes:

pytest --dagz pandas/tests/

What to expect

pandas has roughly 170,000 tests. On first run, DAGZ records function-level coverage for each test. On subsequent runs, it compares your code changes against the baseline and skips tests that don't depend on anything you changed.

A typical edit to a single pandas module will select a few hundred to a few thousand tests out of 170k, running in seconds instead of minutes.
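The selection step can be pictured as a set intersection between the functions each test covered in the baseline and the functions touched by your change. The sketch below is conceptual only: the data layout, the select_tests helper, and the test and function names are invented for illustration and do not reflect how DAGZ stores or queries its baseline.

```python
def select_tests(coverage, changed):
    """Return the tests whose recorded coverage intersects the changed functions."""
    changed = set(changed)
    return sorted(test for test, funcs in coverage.items() if funcs & changed)


# Hypothetical baseline: test name -> functions it executed on the recording run.
baseline = {
    "test_merge_basic": {"pandas.core.reshape.merge.merge", "pandas.core.frame.DataFrame.__init__"},
    "test_read_csv": {"pandas.io.parsers.readers.read_csv"},
    "test_concat": {"pandas.core.reshape.concat.concat"},
}

# Editing merge() selects only the test that depends on it.
print(select_tests(baseline, {"pandas.core.reshape.merge.merge"}))
# ['test_merge_basic']
```

A change that touches no recorded function selects nothing, which corresponds to the runs that skip the whole suite.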

Bootstrapping baselines from history

Selection only saves time once DAGZ has a baseline of what each test covers. The first pytest --dagz run builds it. To pre-populate baselines across recent commits (so a CI-style "every commit has a baseline" history exists from the start), use dagz git-replay inside the container:

dagz git-replay -n 20 --run --exclude-redundant \
    --branch origin/main \
    --pytest-args '-m "not db" --dagz-workers=6'

This walks the last 20 first-parent commits on origin/main oldest-first, runs git reset --hard on each, and runs pytest with --dagz so a baseline is recorded per commit. The first commit gets --dagz-no-baseline automatically; subsequent ones build on the previous baseline. --exclude-redundant lets later jobs skip tests that are already proven against the prior baseline.

--pytest-args is shell-tokenized with shlex and appended to every invocation; use it to scope what you replay. The flag accepts multiple instances; values accumulate.
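To see what shlex tokenization does to the quoted value above, the following sketch splits it with Python's standard shlex module and accumulates several values into one argument list. The build_pytest_argv helper and the order in which it assembles flags are assumptions for illustration; only shlex.split is the real, documented behavior.

```python
import shlex


def build_pytest_argv(pytest_args_values, first_commit=False):
    """Assemble a pytest command line from accumulated --pytest-args values.

    Hypothetical helper: the real dagz git-replay may order its flags differently.
    """
    argv = ["pytest", "--dagz"]
    if first_commit:
        argv.append("--dagz-no-baseline")  # flag named in the text for the first commit
    for value in pytest_args_values:
        argv.extend(shlex.split(value))  # shell-style splitting, quotes respected
    return argv


# The inner double quotes keep "not db" together as a single argument.
print(shlex.split('-m "not db" --dagz-workers=6'))
# ['-m', 'not db', '--dagz-workers=6']

# Two --pytest-args values accumulate in order.
print(build_pytest_argv(['-m "not db"', "--dagz-workers=6"], first_commit=True))
# ['pytest', '--dagz', '--dagz-no-baseline', '-m', 'not db', '--dagz-workers=6']
```

The practical consequence is that a marker expression containing spaces has to be quoted inside the --pytest-args value, as in the command above.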

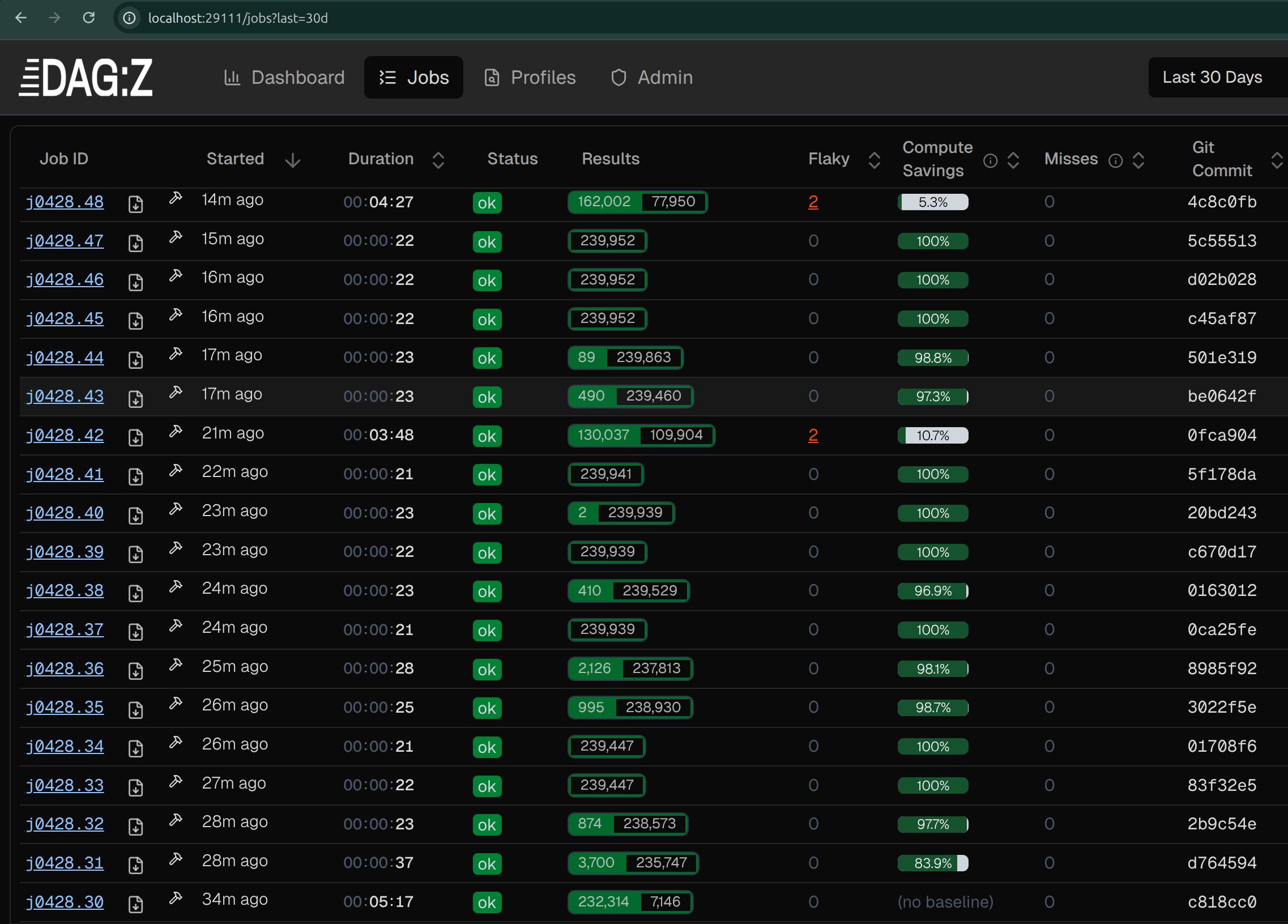

After the replay, the Jobs view shows one row per commit with duration, status, and compute savings:

The bottom row (j0428.30, "no baseline") is the bootstrap: full suite, 5:17 wall. Each later job runs only what its commit's diff actually hits. Commits that didn't touch Python finish in ~20s with 100% savings; commits with widely-dependent changes (5.3% and 10.7% in the screenshot) run for a few minutes.

Caveats:

- git reset --hard on the bind-mounted checkout discards uncommitted changes. Stash or commit your work on the host first.

- Pandas's meson-python editable install auto-rebuilds Cython on each reset; /build keeps the artifacts off the host checkout.

- Drop --run for a dry-run that just prints the commit list and diff stats.

Parallelizing DB tests

Read Parallelizing DB Tests first for the rerouting API and concepts. This section is the pandas-specific worked example.

Pandas's -m db slice connects to MySQL and Postgres via pymysql, psycopg2, and adbc_driver_postgresql.

By default these tests serialize on a single shared DB to avoid colliding on tables; with DAGZ rerouting, each worker gets its own per-worker copy of the database and the slice runs in parallel.

The image starts both databases on container entrypoint, so once the integration is wired into pandas's conftest, pytest -m db --dagz-workers=4 pandas/tests/io/test_sql.py works directly.

The whole integration is one file:

try:
    from dagz.integ.psycopg import PG_CONFIG
    from dagz.integ.pymysql import MYSQL_CONFIG
except ImportError:

    def setup():
        pass

else:

    def setup_adbc_driver():
        """Custom ADBC driver rerouting using DAGZ rerouting framework."""
        import adbc_driver_postgresql.dbapi

        _orig_adbc_connect = adbc_driver_postgresql.dbapi.connect

        def _override_connect(uri, *args, **kwargs):
            uri = PG_CONFIG.maybe_reroute_uri(uri)
            return _orig_adbc_connect(uri, *args, **kwargs)

        adbc_driver_postgresql.dbapi.connect = _override_connect

    def setup_parallel_db():
        import dagz.integ.psycopg2
        import dagz.integ.pymysql

        PG_CONFIG.configure(
            rewrite_db_name=PG_CONFIG.default_rewrite_db_name,
            should_reroute=PG_CONFIG.default_should_reroute,
            worker_init=dagz.integ.psycopg2.create_worker_init(
                ["pandas"], host="127.0.0.1", port=5432, user="postgres", password="postgres"
            ),
            prepare=None,
        )

        MYSQL_CONFIG.configure(
            rewrite_db_name=MYSQL_CONFIG.default_rewrite_db_name,
            should_reroute=MYSQL_CONFIG.default_should_reroute,
            worker_init=dagz.integ.pymysql.create_worker_init(
                ["pandas"], host="127.0.0.1", port=3306, user="root", password=""
            ),
            prepare=None,
        )

    def setup():
        setup_parallel_db()
        setup_adbc_driver()

It calls into DAGZ's built-in psycopg2 and pymysql integrations for per-worker DB rerouting, and adds a small custom hook for adbc_driver_postgresql (which DAGZ doesn't ship a built-in integration for).

The try/except ImportError keeps setup() a no-op when DAGZ isn't installed, so a plain pytest invocation still works.
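To make the rerouting idea concrete, here is a minimal sketch of what rewriting a connection URI per worker could look like. It is not DAGZ's implementation: the reroute_uri helper, the worker_id parameter, and the pandas_w3 naming scheme are all assumptions chosen for illustration, and the real behavior is defined by PG_CONFIG.maybe_reroute_uri and the configured rewrite_db_name.

```python
from urllib.parse import urlsplit, urlunsplit


def reroute_uri(uri, worker_id, databases=("pandas",)):
    """Point a connection URI at a per-worker copy of the database.

    Hypothetical naming scheme: <db>_w<worker_id>. URIs for databases that are
    not in the reroute list, or calls without a worker, pass through unchanged.
    """
    parts = urlsplit(uri)
    db = parts.path.lstrip("/")
    if worker_id is None or db not in databases:
        return uri
    return urlunsplit(parts._replace(path=f"/{db}_w{worker_id}"))


print(reroute_uri("postgresql://postgres:postgres@localhost:5432/pandas", 3))
# postgresql://postgres:postgres@localhost:5432/pandas_w3
```

Because each worker sees a different database name, tests that create and drop the same tables no longer collide, which is what allows the slice to run in parallel.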

To wire it into pandas, place dagz_pandas_integ.py in the pandas checkout root and call setup() from a root conftest.py:

# conftest.py at the pandas repo root

import dagz_pandas_integ

dagz_pandas_integ.setup()

Benchmarks

Head-to-head with pytest-xdist

The numbers below run the pandas test suite (-m 'not db') with 6 workers on the same machine, comparing pytest-xdist and DAGZ both with and without coverage.

xdist (cov) uses pytest-cov's --cov; DAGZ (no cov) uses --dagz-disable-sensors.

All DAGZ runs include --dagz-no-bl to skip baseline lookup and test selection, isolating parallel runtime from selection-driven savings.

CPU cycles are summed across the P-core and E-core counters from perf stat.

DAGZ rows show the delta against the corresponding xdist row in parentheses after each value.

| Configuration | Failures + errors | Wall time | CPU time (user+sys) | CPU cycles (× 10¹²) | Peak memory |
|---|---|---|---|---|---|
| xdist (no cov) | 13 | 407.4s | 2232.3s | 9.06 | 14.5 GB |
| xdist (cov) | 3035 | 550.6s | 2829.8s | 9.30 | 14.2 GB |
| DAGZ (no cov) | 13 (0%) | 319.8s (−22%) | 1809.0s (−19%) | 7.13 (−21%) | 12.2 GB (−16%) |
| DAGZ (cov) | 13 (−99.6%) | 334.4s (−39%) | 1901.6s (−33%) | 7.60 (−18%) | 14.0 GB (−1%) |

With coverage on, pytest-cov adds 35% to xdist's wall time; DAGZ's own coverage instrumentation adds 5%. pytest-cov under xdist also pushes the run into 3,035 failures and errors (82 reported as failures, 2,953 as collection errors), well above the 13 the run produces without it. Savings from test selection apply on top of these numbers.
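The percentages quoted for wall time can be reproduced from the table with a one-line relative-change formula. The snippet below is only a worked check of that arithmetic; pct_change is a helper defined here for the purpose.

```python
def pct_change(value, reference):
    """Relative change of value against reference, as a rounded percentage."""
    return round((value - reference) / reference * 100)


# Wall times in seconds, copied from the table above.
wall = {"xdist": 407.4, "xdist_cov": 550.6, "dagz": 319.8, "dagz_cov": 334.4}

print(pct_change(wall["dagz"], wall["xdist"]))          # -22  DAGZ vs xdist, no coverage
print(pct_change(wall["dagz_cov"], wall["xdist_cov"]))  # -39  DAGZ vs xdist, with coverage
print(pct_change(wall["xdist_cov"], wall["xdist"]))     # 35   coverage overhead under xdist
print(pct_change(wall["dagz_cov"], wall["dagz"]))       # 5    coverage overhead under DAGZ
```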

Measuring on your machine

Inside the container, pytest's wall time and dagz's own report are usually enough.

For deeper CPU and memory numbers, run pytest with the host's perf stat and a transient systemd scope (Linux host only):

systemd-run --user --scope --unit=pytest-run -p MemoryAccounting=yes -- \
    bash -c 'perf stat \
        docker run --rm \
            --env QT_QPA_PLATFORM=offscreen \
            --env DAGZ_URL=http://host.docker.internal:29111 \
            --add-host=host.docker.internal:host-gateway \
            -v "${PWD}:/pandas" \
            dagz-pandas-demo:latest \
            pytest --dagz-no-bl --dagz-workers=6 pandas/tests/base ; \
        systemctl --user status pytest-run'

QT_QPA_PLATFORM=offscreen keeps Qt-backed tests headless.

The trailing systemctl --user status pytest-run prints the scope's resource summary, including peak memory.

Swap pandas/tests/base for the slice you want to benchmark.

Notes

- pandas uses Cython (.pyx/.pxd) for performance-critical code. DAGZ tracks these as binary modules; changes to Cython source files trigger re-selection of dependent tests.

- The pandas test suite has a few tests that require optional dependencies (qapp, httpserver). These will show as errors if the dependencies aren't installed; this is expected and unrelated to DAGZ.

Exporting coverage

After any pytest --dagz run on pandas, export a standard coverage report for the last job (run on the host):

zb export-cov --format pycoverage # → .coverage (coverage.py-compatible SQLite)

zb export-cov --format xml # → coverage.xml (Cobertura)

The .coverage file works with the usual tooling (coverage report, coverage html).

The Cobertura XML can be uploaded directly to Codecov, SonarQube, or GitLab's coverage_report artifact.
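If you want a quick per-file summary without extra tooling, Cobertura XML can be read with Python's standard library. The sketch below parses a small hand-written sample, not real zb export-cov output; it assumes the usual Cobertura layout in which each class element carries filename and line-rate attributes.

```python
import xml.etree.ElementTree as ET

# Hand-written sample in the common Cobertura layout (values are made up).
SAMPLE = """
<coverage line-rate="0.75">
  <packages>
    <package name="pandas.core">
      <classes>
        <class name="frame.py" filename="pandas/core/frame.py" line-rate="0.5"/>
        <class name="sql.py" filename="pandas/io/sql.py" line-rate="1.0"/>
      </classes>
    </package>
  </packages>
</coverage>
"""


def file_line_rates(xml_text):
    """Return {filename: line-rate} for every class element in a Cobertura report."""
    root = ET.fromstring(xml_text.strip())
    return {c.get("filename"): float(c.get("line-rate")) for c in root.iter("class")}


for name, rate in sorted(file_line_rates(SAMPLE).items()):
    print(f"{name}: {rate:.0%}")
```

For a real report, pass the contents of coverage.xml to file_line_rates instead of SAMPLE.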

A note on accuracy for pandas's 170k-test suite:

- Semantic-unit coverage (the one DAGZ uses for selection, visible in the dashboard) is always up-to-date, even when you only ran a few thousand tests under selection. DAGZ folds the current run into the baseline.

- Line coverage (the shape zb export-cov produces) reflects only what executed in the chosen job. For a full-suite line-coverage report, run pandas with selection disabled (e.g. on a scheduled full-run job) and export from that job with -j.

See Coverage → Two Modes of Coverage for the full explanation.

Measuring peak memory

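Inside the container, cgroup v2 makes peak memory available without any extra packages. memory.peak is a high-water mark for the container's cgroup, so the difference below is how far the test run raised it. Start from a fresh container, because the result reads 0 if an earlier command already peaked higher:

# Read the high-water mark before the run
before=$(cat /sys/fs/cgroup/memory.peak)
pytest --dagz pandas/tests/
# Read the high-water mark after the run
after=$(cat /sys/fs/cgroup/memory.peak)
echo "Peak memory: $(( (after - before) / 1024 / 1024 )) MiB"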